What you can do

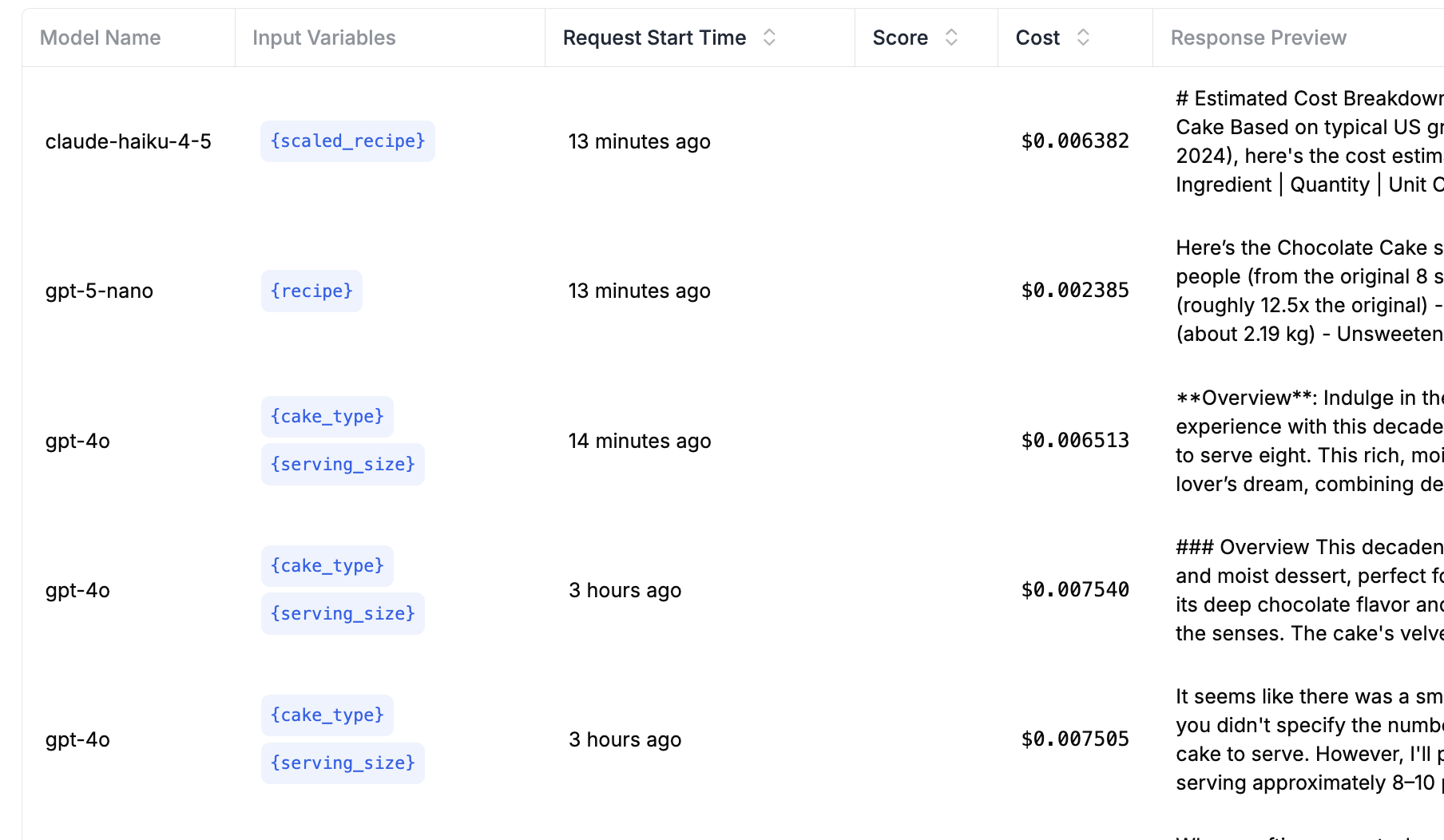

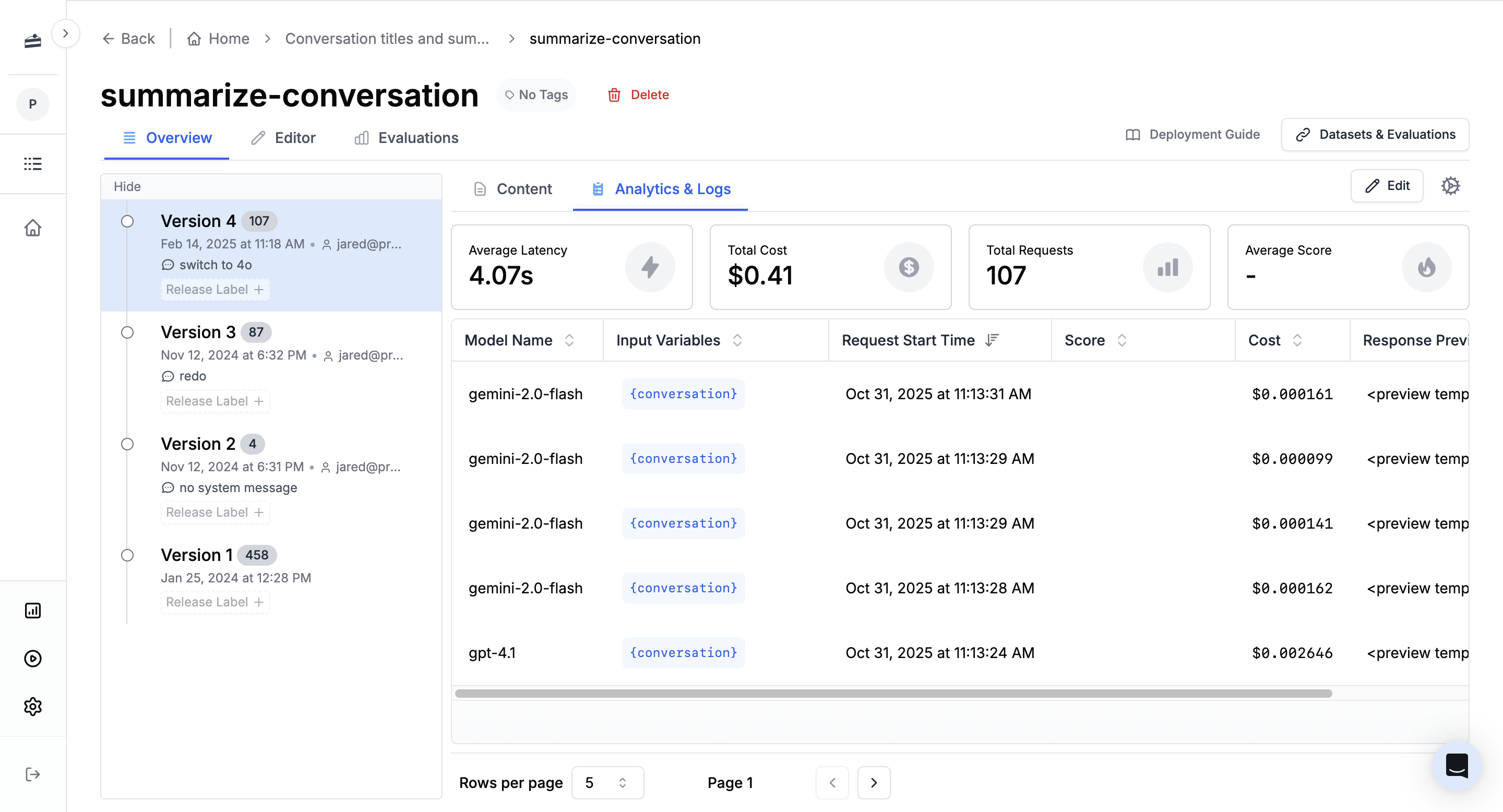

- Analyze application behavior with request logs and traces (spans, inputs, outputs, latency, cost, token usage, and more).

- Turn requests and traces into datasets for evaluations and regression tests.

- Review usage across workspaces, users, and environments.

How it fits together

- Log requests and traces with the PromptLayer SDK, REST API, custom logging, or OpenTelemetry.

- Add metadata, tags, scores, and prompt associations so you can find the right runs later.

- Use logs, traces, and analytics to understand application behavior across prompts, users, sessions, models, workflows, and environments.

- Use the same data to understand how your team uses PromptLayer.

- Convert useful history into datasets for evaluations and automated feedback loops.
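The flow above can be pictured with a small, self-contained sketch. It does not call the PromptLayer SDK or REST API. `LoggedRequest`, `find_runs`, and `to_dataset_rows` are hypothetical names invented for illustration, and the field layout is an assumption about what an enriched log might carry (tags, metadata, a score, and a prompt association). The point is only to show why attaching that context at logging time makes it easy to find the right runs later and turn them into dataset rows.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class LoggedRequest:
    """Hypothetical stand-in for one logged request (not a PromptLayer type)."""
    request_id: str
    prompt_name: str  # prompt association
    input_text: str
    output_text: str
    tags: List[str] = field(default_factory=list)
    metadata: Dict[str, Any] = field(default_factory=dict)
    score: Optional[int] = None  # assumed to be a 0-100 quality score


def find_runs(logs, *, tag=None, min_score=None, **metadata_filters):
    """Return logs that carry `tag`, meet `min_score`, and match every metadata value."""
    matches = []
    for log in logs:
        if tag is not None and tag not in log.tags:
            continue
        if min_score is not None and (log.score is None or log.score < min_score):
            continue
        if any(log.metadata.get(key) != value for key, value in metadata_filters.items()):
            continue
        matches.append(log)
    return matches


def to_dataset_rows(logs):
    """Convert selected logs into simple input/expected-output rows for an evaluation."""
    return [
        {"input": log.input_text, "expected_output": log.output_text}
        for log in logs
    ]


# Illustrative history: three requests enriched with tags, metadata, and scores.
logs = [
    LoggedRequest("req-1", "support-reply", "Where is my order?", "It ships tomorrow.",
                  tags=["prod"], metadata={"environment": "production"}, score=90),
    LoggedRequest("req-2", "support-reply", "Cancel my plan.", "I cannot help with that.",
                  tags=["prod"], metadata={"environment": "production"}, score=40),
    LoggedRequest("req-3", "support-reply", "Reset my password.", "Use the reset link.",
                  tags=["staging"], metadata={"environment": "staging"}, score=95),
]

# Keep only well-scored production runs, then turn them into dataset rows.
good_runs = find_runs(logs, tag="prod", min_score=80, environment="production")
dataset = to_dataset_rows(good_runs)
```

Here only `req-1` satisfies all three filters, so `dataset` holds a single row. In a real integration the enrichment and filtering would go through PromptLayer's own logging and search features rather than in-memory objects.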

Viewing logs

Click Logs in the sidebar to see request history. You can filter by prompt, search by content, inspect errors, and review request details.

Next steps

Analytics

Track cost, latency, request volume, token usage, models, prompts, tags, and metadata.

Traces

Inspect span hierarchies, timing, inputs, outputs, errors, and linked request logs.

Advanced Search

Find logs by request content, metadata, tags, scores, status, model, prompt, and usage fields.

Create Datasets from History

Build evaluation datasets from filtered request history and production examples.

Advanced Logging

Add request IDs, metadata, tags, scores, prompt associations, and custom logs from code.