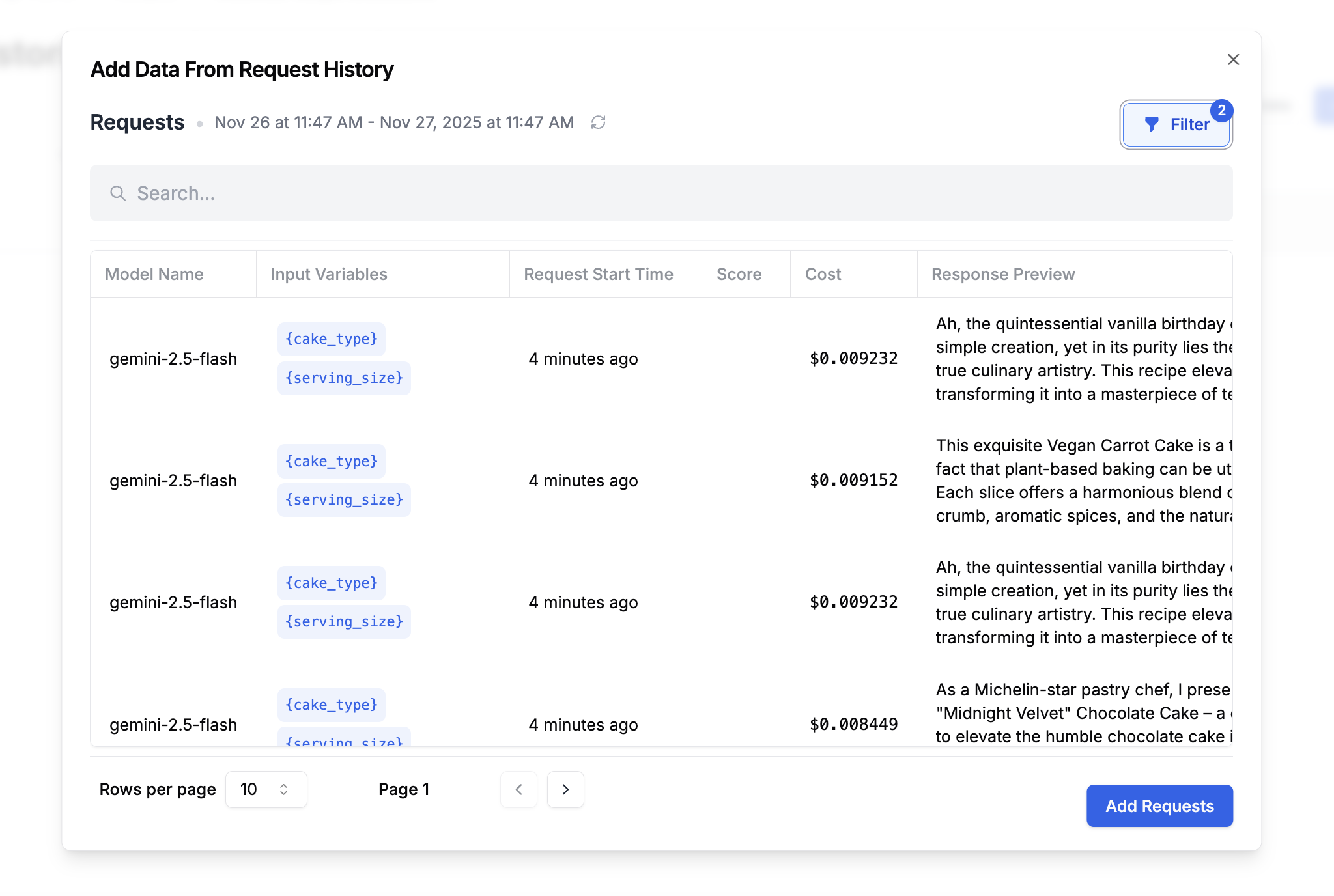

Create a historical dataset

Go to Datasets and click Add from Request History. This opens a request log browser where you can filter and select requests.

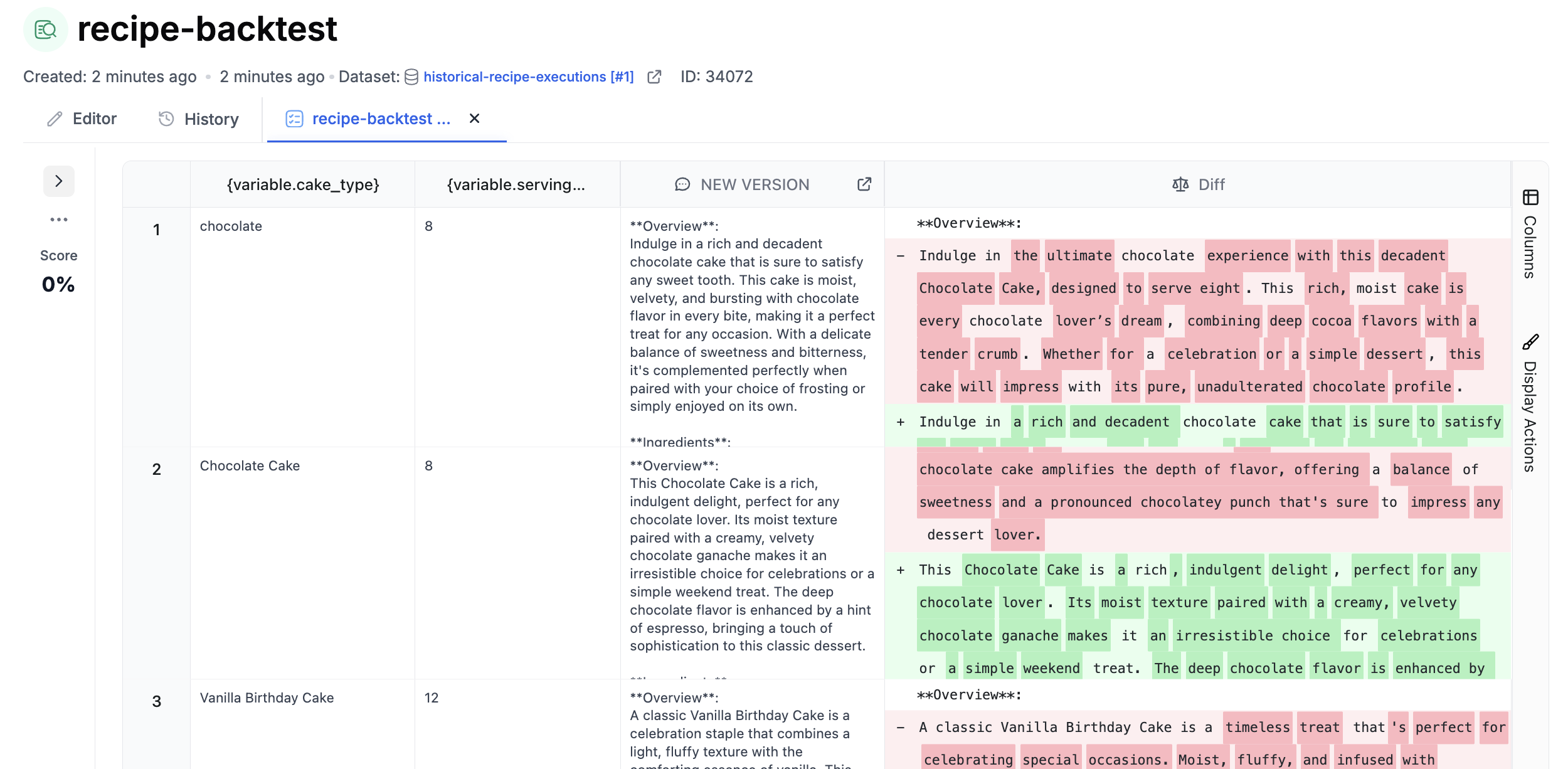

Run a backtest

Create an evaluation that runs your new prompt version against the historical dataset. Add columns for:

- New prompt output: The response from your updated prompt version
- Comparison: An equality comparison, semantic similarity check, LLM-as-judge score, or human review column
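
To make the two columns concrete, here is a minimal offline sketch of the same idea in Python. It is not the platform's API: `HistoricalRequest`, `backtest`, and `run_new_prompt` are hypothetical names, and the character-level ratio from the standard library's `difflib` is only a rough stand-in for a real semantic similarity check or LLM-as-judge score.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Callable, Dict, List


@dataclass
class HistoricalRequest:
    """Hypothetical record for one logged request and its original response."""
    input_text: str
    original_output: str


def backtest(
    dataset: List[HistoricalRequest],
    run_new_prompt: Callable[[str], str],
    threshold: float = 0.8,
) -> List[Dict[str, object]]:
    """Re-run each historical input through the new prompt and compare outputs.

    `run_new_prompt` is an assumed callable that wraps your updated prompt
    version; `threshold` is an arbitrary cutoff for flagging rows to review.
    """
    rows = []
    for req in dataset:
        # "New prompt output" column
        new_output = run_new_prompt(req.input_text)
        # "Comparison" columns: exact equality plus a crude textual similarity
        similarity = SequenceMatcher(None, req.original_output, new_output).ratio()
        rows.append({
            "input": req.input_text,
            "original_output": req.original_output,
            "new_output": new_output,
            "exact_match": new_output == req.original_output,
            "similarity": similarity,
            "needs_review": similarity < threshold,
        })
    return rows


if __name__ == "__main__":
    # Toy data standing in for requests selected from the request history.
    dataset = [
        HistoricalRequest("2+2", "4"),
        HistoricalRequest("capital of France", "Paris"),
    ]
    # Stub for the updated prompt version; replace with a real model call.
    canned = {"2+2": "4", "capital of France": "The capital is Paris."}
    for row in backtest(dataset, lambda text: canned[text]):
        print(row["input"], row["exact_match"], row["needs_review"])
```

Rows marked `needs_review` are the ones worth routing to a human review column, since a low textual similarity does not by itself mean the new response is worse.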